Gesture control

Hint

The operating environment and the software and hardware configuration are as follows:

- OriginBot robot (Visual Version/Navigation Version)

- PC: Ubuntu (≥20.04) + ROS2 (≥Foxy)

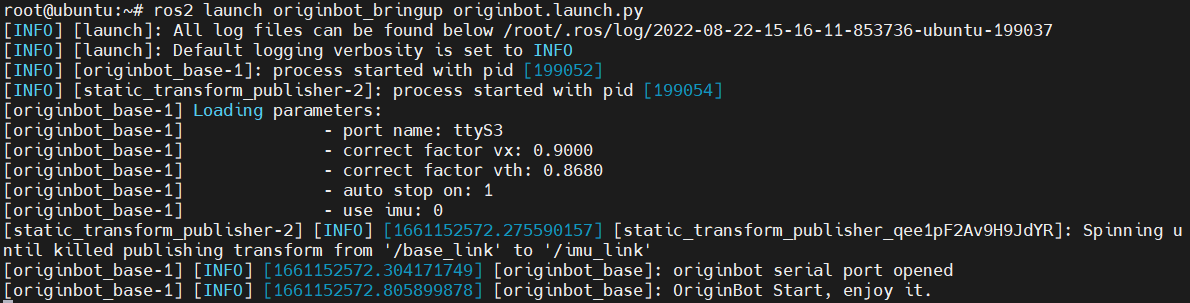

Start the robot chassis

After connecting to OriginBot over SSH, run the following command in the terminal to start the robot chassis:

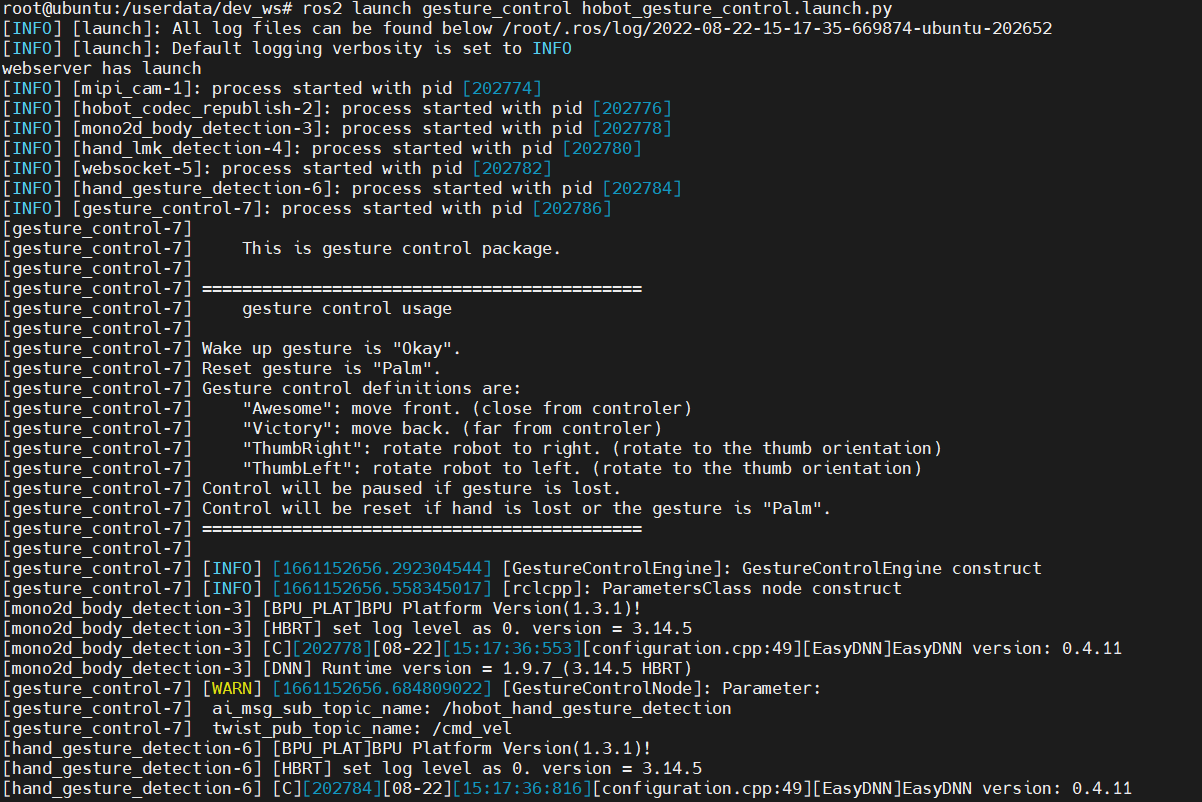

Enable the gesture control function

```bash
$ cd /userdata/dev_ws

# Copy the configuration files needed to run the example from the TogetheROS installation path
$ cp -r /opt/tros/lib/mono2d_body_detection/config/ .
$ cp -r /opt/tros/lib/hand_lmk_detection/config/ .
$ cp -r /opt/tros/lib/hand_gesture_detection/config/ .

# Start the launch file
$ ros2 launch gesture_control hobot_gesture_control.launch.py
```

Attention

Make sure the configuration files are in the directory you launch from (here /userdata/dev_ws). Otherwise the application cannot find them and fails to run.
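The copy step and the check that the note above asks for can be wrapped in two small shell functions. This is a minimal sketch. `copy_tros_configs` and `check_configs` are hypothetical helper names, not part of OriginBot or TogetheROS. The check only confirms that a non-empty `config/` directory exists in the launch directory; it does not verify that every file the nodes need is present.

```bash
#!/usr/bin/env bash
# Hypothetical helpers (not part of OriginBot/TogetheROS): copy the config/
# directories used by the gesture-control example and check they are present.

# Packages whose config/ directories are copied in the steps above.
GESTURE_CONFIG_PKGS="mono2d_body_detection hand_lmk_detection hand_gesture_detection"

# copy_tros_configs SRC_ROOT DEST_DIR
# Copies SRC_ROOT/<pkg>/config into DEST_DIR for every package. Because each
# source directory is named "config", they are merged into DEST_DIR/config.
copy_tros_configs() {
  local src_root="$1" dest="$2" pkg
  for pkg in $GESTURE_CONFIG_PKGS; do
    cp -r "$src_root/$pkg/config" "$dest/" || return 1
  done
}

# check_configs [DIR]
# Returns 0 if DIR/config exists and is non-empty; otherwise prints an error
# to stderr and returns 1. DIR defaults to the current directory.
check_configs() {
  local dir="${1:-.}"
  if [ -d "$dir/config" ] && [ -n "$(ls -A "$dir/config")" ]; then
    return 0
  fi
  echo "error: no usable config/ in $dir; copy the TogetheROS configs first" >&2
  return 1
}
```

For example, after sourcing these functions from /userdata/dev_ws, `copy_tros_configs /opt/tros/lib . && check_configs . && ros2 launch gesture_control hobot_gesture_control.launch.py` only launches when the configuration directory is in place.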

Controlling the robot with gestures

After a successful startup, stand in front of the OriginBot camera and use hand gestures to control the robot's movement.

Visualization on the host PC

Open a browser on the PC and go to the robot's IP address to see the visual recognition results in real time.

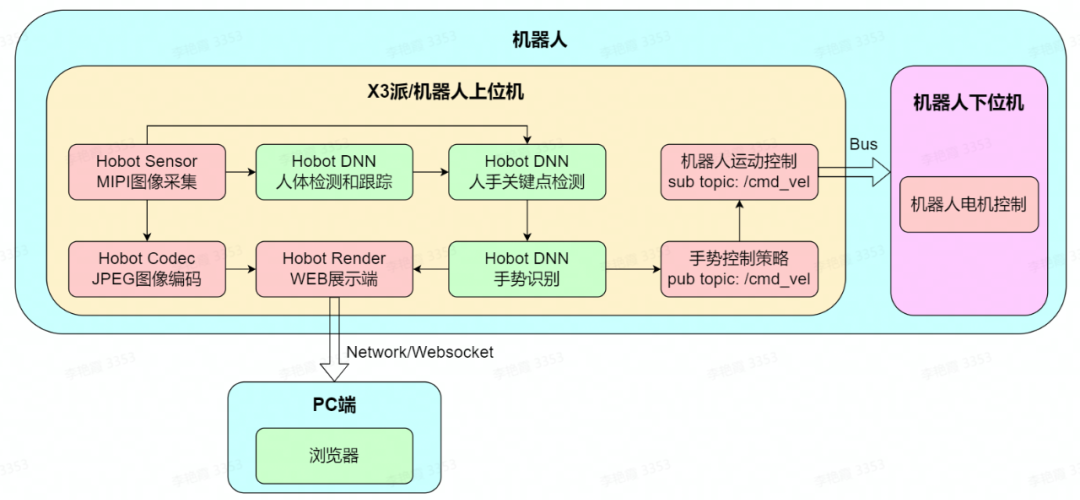

How it works

The gesture control function lets you drive OriginBot with hand gestures: rotating left and right, and moving forward and backward. It is built from the following modules: MIPI image acquisition, human body detection and tracking, hand keypoint detection, gesture recognition, the gesture control strategy, image encoding and decoding, and web display.

For a detailed explanation of the principle, please see:

https://developer.horizon.cc/college/detail/id=98129467158916314
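To make the gesture control strategy stage more concrete, the sketch below maps a recognized gesture to a velocity command. It produces the YAML form that `ros2 topic pub` accepts for `geometry_msgs/msg/Twist`. It is hypothetical throughout. The gesture labels (`forward`, `backward`, `turn_left`, `turn_right`) and the speeds are placeholders, not the gestures or values used by the `gesture_control` package. The only convention it relies on is the standard ROS one that positive `angular.z` turns the robot left (counterclockwise).

```bash
#!/usr/bin/env bash
# Hypothetical sketch of a gesture control strategy: map a gesture label to a
# geometry_msgs/msg/Twist in YAML form. Labels and speeds are placeholders,
# not the values used by the gesture_control package.

LINEAR_SPEED=0.3   # m/s, hypothetical
ANGULAR_SPEED=0.5  # rad/s, hypothetical

# gesture_to_twist LABEL -> prints a Twist as inline YAML
gesture_to_twist() {
  local lin=0.0 ang=0.0
  case "$1" in
    forward)    lin=$LINEAR_SPEED ;;
    backward)   lin=-$LINEAR_SPEED ;;
    turn_left)  ang=$ANGULAR_SPEED ;;    # +z is counterclockwise (left)
    turn_right) ang=-$ANGULAR_SPEED ;;
    *) ;;                                # unknown gesture: zero twist (stop)
  esac
  printf '{linear: {x: %s, y: 0.0, z: 0.0}, angular: {x: 0.0, y: 0.0, z: %s}}\n' "$lin" "$ang"
}
```

For example, `ros2 topic pub --once /cmd_vel geometry_msgs/msg/Twist "$(gesture_to_twist turn_left)"` would publish a single left-turn command, assuming the chassis listens on `/cmd_vel` (an assumption; check the topic name on your robot). Unrecognized labels fall through to a zero twist, so in this sketch an unknown gesture yields a stop command rather than repeating the last motion.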