OriginBot User Guide

Let's embark on the journey of intelligent robot development together!

Info

The following steps assume some basic knowledge of robot development. We recommend testing yourself with these questions first:

- What is Linux? What is Ubuntu? How do you open a command-line terminal? What do the commands cd, ls, and sudo do?

- What is SSH? Which SSH clients are commonly used on Windows and Ubuntu, and how do you use them?

- What is ROS/ROS2? What are its core concepts? How do you install and use it? How do you build a workspace and set environment variables?

If you can answer these questions, continue with the content below. Otherwise, we recommend that you not rush to run the robot. Spend 3 to 5 days working through the questions first (the answers can be found with a search engine or in reference materials); this will give you a much better start on the robot development that follows.

1. Choose the Right Kit

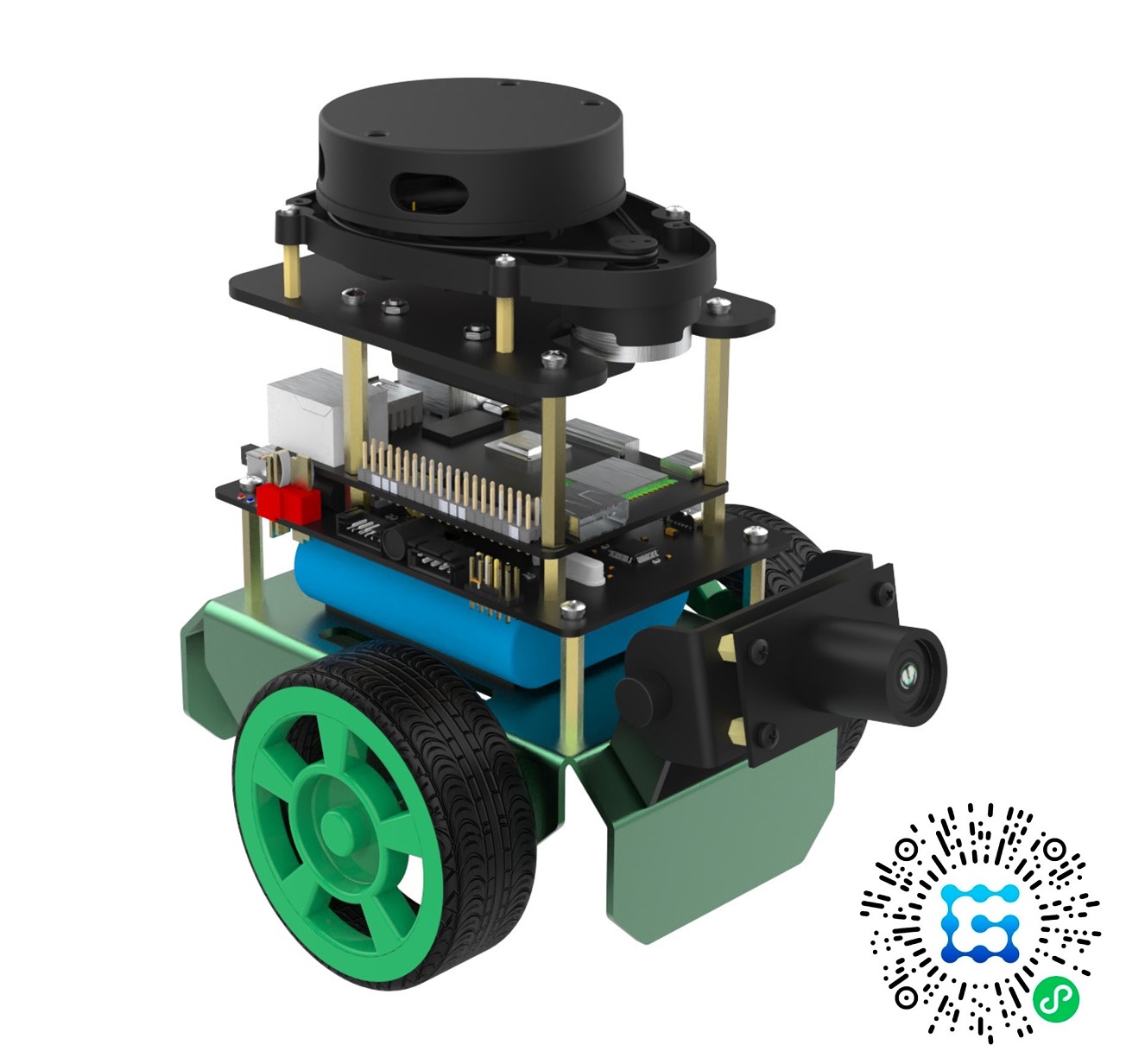

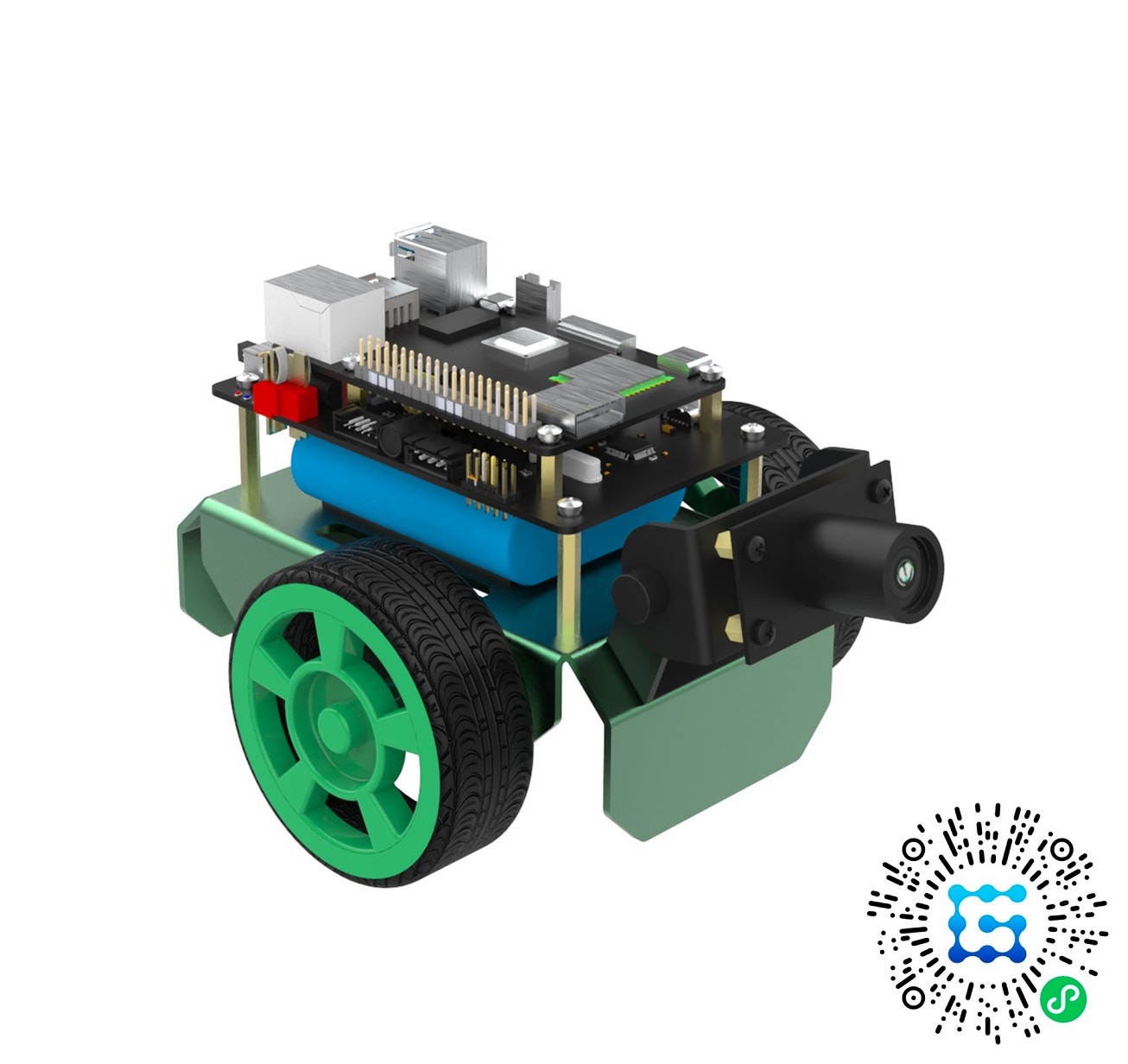

OriginBot is available in two versions: a visual version and a navigation version. Both use the RDK X3 as the core processor, which provides 5 TOPS of computing power, together with a 4-megapixel high-definition camera, enabling a wide range of vision applications.

In addition to everything in the visual version, the navigation version comes with an IMU attitude sensor, a lidar, and personalized accessories. These let you go further and develop SLAM mapping and autonomous navigation applications, covering the full range of intelligent robot development needs.

| Navigation version | Visual version |
|---|---|
| Includes encoder, camera, IMU, lidar, lidar stickers | Includes encoder and camera |

For a detailed bill of materials for the OriginBot kit, please refer to: OriginBot Kit List

2. Assemble OriginBot

Follow the Kit Assembly guide or the instructions provided in the kit to assemble OriginBot.

Hint

Assembly takes about 30 to 60 minutes. Building the robot yourself not only helps you understand how it is put together but also makes your OriginBot unique.

3. Burn the Image and Firmware

Once the kit is assembled, OriginBot has its "body"; next, we give it a "soul".

(1) Follow "Burn the OriginBot SD Card Image" in the "System Installation and Backup" section to flash the RDK X3 (Sunrise X3 Pi) image.

(2) Follow "Burn the Controller Firmware" in the "Controller Firmware Installation" section to flash the controller firmware.

Attention

OriginBot ships from the factory without the SD card image or the controller firmware flashed. Be sure to complete both steps above; otherwise, the functions in the following sections will not work properly.

4. Configure the Computer Environment

To monitor and remotely control the robot, configure your computer as follows:

(1) Following Ubuntu System Installation, install Ubuntu on your local computer. Ubuntu 20.04 or Ubuntu 22.04 is recommended.

(2) Following ROS2 System Installation, install ROS2 on the Ubuntu system from the previous step. ROS2 Foxy (for Ubuntu 20.04) or ROS2 Humble (for Ubuntu 22.04) is recommended.

(3) Following Downloading/Compiling PC Function Packages, download and compile the OriginBot function packages on your computer. These are mainly used later for visualization and simulation on the host computer.

5. Run the Quick Start Sample

Now you are ready to get OriginBot moving.

Follow the Quick Start to run OriginBot for the first time and drive it around the floor by remote control.
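Remote control ultimately comes down to two numbers sent to the chassis: a forward speed and a turning rate. As a rough illustration of what a differential-drive base does with them, the sketch below splits such a command into left and right wheel speeds. It assumes OriginBot behaves as a two-wheel differential-drive robot and that commands follow the ROS convention (linear x in m/s, angular z in rad/s, positive means turn left). `WHEEL_SEPARATION_M` and `twist_to_wheel_speeds` are hypothetical names, the 0.1 m separation is a placeholder rather than an OriginBot specification, and this is not the OriginBot driver's code.

```python
from dataclasses import dataclass

# Hypothetical placeholder; measure the distance between your robot's wheels.
WHEEL_SEPARATION_M = 0.1


@dataclass
class WheelSpeeds:
    left: float   # rim speed of the left wheel, m/s
    right: float  # rim speed of the right wheel, m/s


def twist_to_wheel_speeds(linear_x: float, angular_z: float,
                          wheel_separation: float = WHEEL_SEPARATION_M) -> WheelSpeeds:
    """Differential-drive inverse kinematics.

    linear_x: forward speed in m/s.
    angular_z: yaw rate in rad/s; positive turns the robot left (counterclockwise).
    """
    half_track = wheel_separation / 2.0
    # Turning left means the right wheel runs faster than the left one.
    return WheelSpeeds(
        left=linear_x - angular_z * half_track,
        right=linear_x + angular_z * half_track,
    )


if __name__ == "__main__":
    print(twist_to_wheel_speeds(0.2, 0.0))  # drive straight ahead
    print(twist_to_wheel_speeds(0.0, 1.0))  # spin in place to the left
```

Equal wheel speeds drive the robot straight, while equal and opposite speeds spin it in place; any mix of the two produces an arc.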

6. Run the Robot Functions

OriginBot comes with a number of sample programs that help every developer fully understand how intelligent robots are developed. Detailed instructions for each are given in the following sections:

Tip

OriginBot is developed and run on ROS2. We recommend learning the basics of ROS2 in advance; see the teaching courses.

Basic Use

This section describes how to use the basic functions of OriginBot:

| Function | Description | Difficulty |
|---|---|---|
| Set up a development environment | Setting up a remote debugging environment in VS Code | Beginner |
| Code development methodology | Modifying and compiling function packages | Beginner |
| Robot startup and parameter configuration | Starting up the OriginBot chassis and sensors | Beginner |
| Robot remote control and visualization | Driving the robot forward, backward, left, and right with a keyboard or joystick | Beginner |
| Camera driver and visualization | Visualizing camera image data | Beginner |
| Lidar driver and visualization | Visualizing lidar scan data | Beginner |
| IMU driver and visualization | Visualizing IMU data | Beginner |
| Dynamic monitoring of robot parameters | Viewing robot status (voltage, peripherals, temperature) and sending motor PID parameters from the host computer | Beginner |
| Robot odometry calibration | Calibrating the robot's linear and angular velocities | Intermediate |
| Robot charging method | Charging the robot | Beginner |
| Communication protocol description | The communication protocol between the controller and the RDK X3 (Sunrise X3 Pi) | Intermediate |
| Host computer control instructions | Communication between the host computer and the RDK X3 (Sunrise X3 Pi) | Advanced |
| RTOS configuration | Configuring the controller with FreeRTOS | Advanced |
| EKF multi-sensor fusion | Multi-sensor localization with an EKF | Beginner |
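Odometry calibration usually comes down to a ratio: drive (or rotate) the robot, measure the true distance (or angle) by hand, and compare it with what odometry reported. The sketch below computes an updated correction factor under one stated assumption: the factor multiplies the odometry estimate, so a robot that over-reports its motion needs a smaller factor. `updated_correction_factor` is a hypothetical helper; the actual OriginBot calibration procedure and parameter names are covered in the Robot odometry calibration section.

```python
def updated_correction_factor(measured: float, reported: float,
                              current_factor: float = 1.0) -> float:
    """Return a new odometry correction factor.

    Assumes odometry = current_factor * raw_estimate, so the factor that would
    have made odometry match the ground truth is current_factor * measured / reported.
    Works for distances (m) or angles (deg or rad) as long as both use the same unit.
    """
    if reported == 0:
        raise ValueError("odometry reported no motion; repeat the test run")
    return current_factor * measured / reported


if __name__ == "__main__":
    # Linear test: odometry said 1.00 m, but a tape measure says 0.95 m.
    linear = updated_correction_factor(measured=0.95, reported=1.00)
    # Angular test: odometry said 360 degrees, but the robot actually turned 350.
    angular = updated_correction_factor(measured=350.0, reported=360.0)
    print(f"linear factor:  {linear:.4f}")
    print(f"angular factor: {angular:.4f}")
```

Repeating each test run a few times and averaging the resulting factors reduces the influence of hand-measurement error.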

Application Functions

This section describes how to run OriginBot's application functions:

| Function | Description | Difficulty |
|---|---|---|
| Basic functional programming | Programming examples for basic robot functions (reading robot status, controlling robot peripherals) | Intermediate |
| SLAM mapping | Mapping with Cartographer | Beginner |
| Autonomous navigation | Autonomous localization and navigation using Navigation2 and AMCL | Beginner |
| Human body tracking | The robot recognizes human bodies and follows their movement | Beginner |
| Gesture control | The robot recognizes gestures and performs the corresponding actions | Beginner |
| Visual line follower (OpenCV) | Line following implemented with OpenCV | Intermediate |
| Visual line follower (AI deep learning) | Line following based on deep learning | Advanced |
| Gazebo virtual simulation | Running a 3D physics simulation of OriginBot on a PC | Intermediate |
| SLAM mapping (Gazebo) | Mapping in a 3D physics simulation of OriginBot on a PC | Intermediate |
| Autonomous navigation (Gazebo) | Autonomous navigation in a 3D physics simulation of OriginBot on a PC | Intermediate |
| Parking spot detection (AI deep learning) | The robot recognizes parking spots and drives into them | Beginner |
| Trajectory tracking | The robot follows a designated trajectory | Beginner |
| Kicking a ball into a goal | The robot recognizes a football and shoots it into the goal | Advanced |
| Voice control | The robot recognizes voice commands and responds accordingly | Advanced |
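A camera-based line follower generally has two parts: image processing that locates the line, and a controller that steers toward it. As a hedged sketch of the second part only, assuming the image has already been thresholded into a binary row (1 = line pixel) taken near the bottom of the frame, the code below computes the line's centroid and turns toward it with a proportional gain. The function names, `FORWARD_SPEED`, and `STEER_GAIN` are hypothetical, and the OriginBot sample may be implemented differently.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical tuning values for illustration only.
FORWARD_SPEED = 0.1  # m/s while the line is visible
STEER_GAIN = 0.8     # rad/s per unit of normalized offset


def line_centroid(mask_row: Sequence[int]) -> Optional[float]:
    """Mean column index of line pixels (non-zero values), or None if none are visible."""
    cols = [i for i, v in enumerate(mask_row) if v]
    if not cols:
        return None
    return sum(cols) / len(cols)


def steer_command(mask_row: Sequence[int]) -> Tuple[float, float]:
    """Map the line position in one image row to (linear_x, angular_z).

    Uses the ROS convention that positive angular_z turns the robot left.
    """
    if len(mask_row) < 2:
        raise ValueError("mask row must contain at least two pixels")
    centroid = line_centroid(mask_row)
    if centroid is None:
        return 0.0, 0.0  # line lost: stop rather than drive blindly
    center = (len(mask_row) - 1) / 2.0
    # Normalized offset in [-1, 1]; negative when the line is left of center.
    offset = (centroid - center) / center
    # Proportional control: steer toward the line, harder the further off it is.
    return FORWARD_SPEED, -STEER_GAIN * offset


if __name__ == "__main__":
    print(steer_command([0, 0, 0, 0, 1, 1, 0, 0, 0, 0]))  # line centered
    print(steer_command([1, 1, 0, 0, 0, 0, 0, 0, 0, 0]))  # line on the left
```

A purely proportional controller is the simplest choice here; if the robot oscillates around the line, lowering the gain or adding a derivative term is the usual next step.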


7. Study the Companion Courses

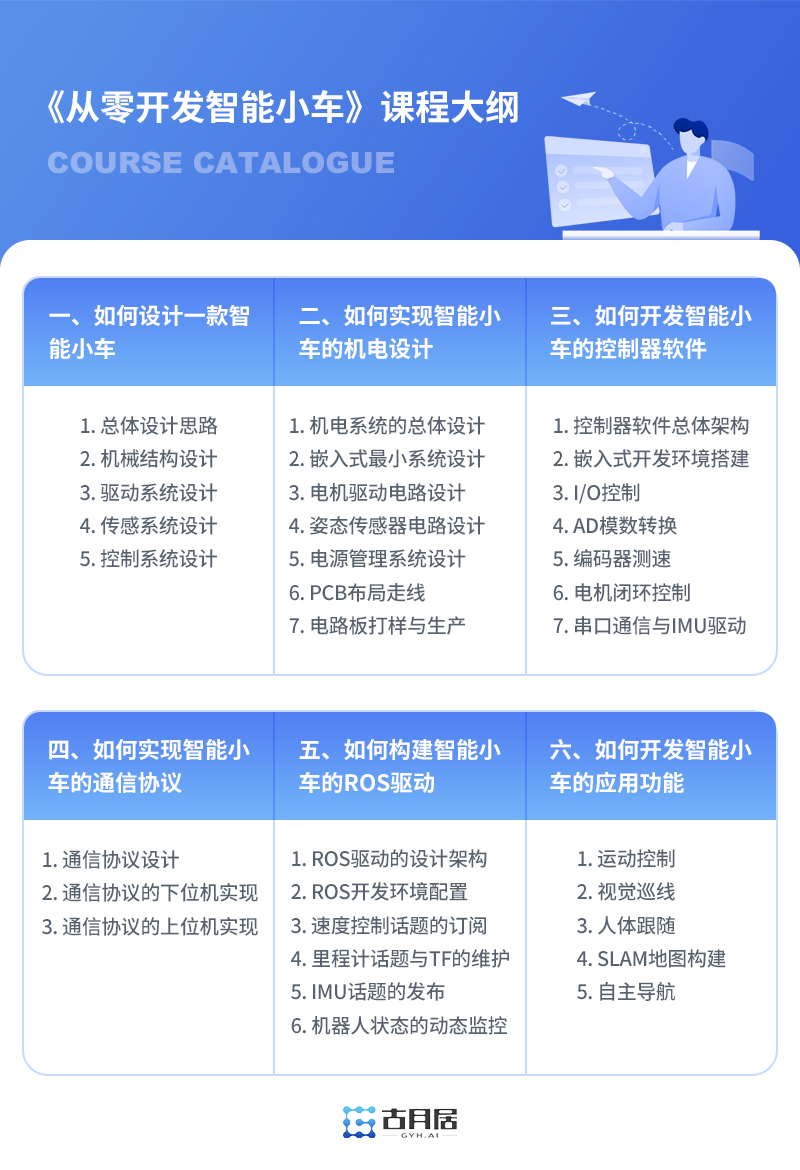

The course series "Developing an Intelligent Car from Scratch" explains the design and development of OriginBot in detail. Kit owners can access it with the exclusive course redemption card included in the kit; other users can purchase it separately.

Exploring More Possibilities

OriginBot is an open-source project built jointly by the community. Everyone is welcome to build on it and make their OriginBot even more unique. We encourage all developers to study the project, learn from it, give feedback, and contribute.

If you have come up with innovative ideas based on the OriginBot open-source project, please feel free to share them here!

Wishing you all a wonderful journey in robotics development! ☻